NYEX AI: A Local-First, Model-Agnostic Agentic AI Engineering Platform with Persistent Memory

Build apps, automate workflows, and power intelligent systems, from email and marketing automation and customer service bots to systems diagnostics and the full software development lifecycle. Use your own model, your own data, and your own infrastructure.

Shaping the Future of Intelligence

Our vision is to become a leading global provider of AI-powered chatbot solutions, offering innovative technologies that reshape how businesses connect with customers.

The Team Powering Innovation

We are an AI startup focused on building secure and scalable solutions that transform how businesses operate and grow.

What Makes Us Different

We stand out by offering customized AI models, seamless integrations, and enterprise-grade security trusted by startups and enterprises alike.

Our Mission & Vision

WHY TEAMS CHOOSE NYEX AI

NYEX AI is built to work across frontier and local models, so teams are not locked into one model generation. As AI capabilities, pricing, and policies change, teams can switch or combine models without rebuilding their workflows. This keeps your operations stable even when the AI market shifts quickly.

NYEX AI starts where most teams hesitate: security and control. It runs inside your own environment, under your own policies, so sensitive code, customer tickets, and operational logs stay on your own infrastructure.

Teams see value quickly because NYEX AI supports the full workflow, not just one task. It helps with ideation, business cases, architecture, engineering, testing, security checks, and diagnostics, all within the same sprint.

Unlike session-based chat tools, NYEX AI keeps a persistent memory of decisions, fixes, and patterns. Teams stop repeating the same investigations and start from what they have already learned. Engineers spend less time rediscovering old answers and more time solving new problems, so delivery quality and speed improve together.

Built-in governance, access controls, and audit trails make AI usage measurable and manageable. Management can still answer critical questions: who used what, for which purpose, and with what result. Engineers keep speed, while management gets confidence and accountability. This balance builds trust across technical, security, and executive stakeholders.

NYEX AI helps existing teams deliver more without immediate hiring pressure. By automating repeatable work across planning, coding, testing, and diagnostics, it reduces time spent on low-leverage tasks and frees experts to focus on high-value decisions. This creates a durable moat: faster execution, lower delivery cost per project, and stronger margins as workload grows. Instead of scaling primarily through added headcount, teams scale through better systems and compounding operational efficiency.

Use frontier LLMs for complex tasks and local open-source LLMs for routine tasks.

Data and memory are stored locally, which minimizes the risk of proprietary data leakage.

Knowledge persists across sessions with inspectable memory entries.

Boost productivity, innovate faster, and deliver more with the same team.

AI Model Agnostic

5X Productivity

Enterprise 24/7 Support

Get Started in Minutes

Register in minutes and experience how NYEX can transform your team’s productivity, helping you innovate, scale faster, and deliver more at a rapid pace.

Strategic AI Roadmap Development

Seamless AI Integration and Intelligent Automation

Tailored AI Solutions for Industry Challenges

Workflow Automation Made Smarter

Advanced AI Analytics for Insights

Generative AI Tools for Content

- Download Node.js: https://nodejs.org/en/download

- Subscribe to your preferred LLM provider: OpenAI (GPT), Anthropic (Claude), or Google (Gemini).

- Download a local LLM runtime such as Ollama (optional): https://ollama.com/download/windows

- Open a terminal (PowerShell on Windows) to install NYEX via npm.

- Install the latest version: npm install -g @nyex/nyex

- To launch NYEX AI, type: nyex

- In the NYEX terminal UI, follow the registration onboarding and subscribe to NYEX.

- Select your preferred LLM provider with /providers and your model with /model.

Learn the NYEX AI Platform

Use Your Own LLM Subscription. Keep Your Data and Memory. Own Your AI Agents. Control Your Costs.

FREE (First 3 Months FREE)

Deploy locally, use your own LLM and keep your data local. Experience the power of NYEX AI.

- NYEX AI Core Infrastructure

- 1 Account

- Foundation Multi-Model Routing

- Local Model Stack

- Role-Based Orchestration Agents

- Persistent Local Memory

GO

Deploy your AI agents locally and experience how a digital workforce can 5X your productivity and help you scale faster.

- NYEX AI Core Infrastructure

- 1 Account

- Foundation Model Routing

- Local Model Stack

- Role-Based Orchestration Agents

- Persistent Local Memory

PRO

Optimized for individual developers, independent consultants, attorneys, compliance specialists, creators, and technical founders.

- NYEX AI Core Infrastructure

- Up to 2 Accounts

- Foundation Model Routing

- Local Model Stack

- Role-Based Orchestration Agents

- Persistent Local Memory

- Model Context Protocol (MCP)

- Tools & HTTP Connectors

- Extensions and Plugins

- Email Support

- Unlimited

TEAM

Optimized for product teams, revenue-generating agencies, technical consultancies, fast-scaling startups, and professional services firms.

- NYEX AI Core Infrastructure

- Up to 6 Accounts

- Foundation Model Routing

- Local Model Stack

- Role-Based Orchestration Agents

- Persistent Local Memory

- Tool Bus Engine

- MCP Studio Adapter, HTTP Transport Inbound & Outbound

- Priority Feature Updates

- Policy as Code

- Extensions and Plugins

- Email Support (Priority)

- Unlimited

ENTERPRISE

Optimized for enterprise transformation and adoption, with compliance, quality gates, deployment controls, and SLAs.

- NYEX AI Core Infrastructure

- Enterprise SSO Accounts

- Digital Transformation with AI Infrastructure

- Foundation Model Routing

- Local Model Stack

- Role-Based Orchestration Agents

- Persistent Local Memory

- OpenBrain Enterprise Shared Memory Layer

- Tool Bus Engine

- MCP Studio Adapter, HTTP Transport Inbound & Outbound

- RBAC: Role-Based Access Control (Advanced)

- Policy as Code (Advanced Governance)

- Governance as Architecture

- Email Support (Priority)

- Hypercare Enterprise Support SLA (Contractual SLA)

- Dedicated Success Engineer

AI Questions & Answers

Explore common questions to better understand how our AI services work, their benefits, and how they can be tailored to your business needs.

Does any of our data get sent to your servers?

No. If you connect to a foundation LLM (such as OpenAI, Anthropic, or Gemini), data is sent only to that provider, under your own subscription and policies, and never through us.

We do not host, store, or process your engineering data in the cloud.

How does token cost reduction actually work?

● Routine or structured tasks run on a local (on-premises) LLM.

● Higher-quality foundation models are used only when necessary.

● Persistent vector memory ensures only relevant context is retrieved, reducing unnecessary token load.

You use your own LLM subscriptions, so you pay only for what you consume, and the system minimizes unnecessary external calls.
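
The routing described above can be illustrated as a local-first policy with escalation. This is a minimal TypeScript sketch under stated assumptions, not NYEX's implementation: the task categories, the Model function type, and the isAcceptable quality check are hypothetical stand-ins, and real model calls would be asynchronous.

```typescript
// Hypothetical task categories; how NYEX actually classifies tasks is not described here.
type TaskKind = "routine" | "structured" | "complex";

interface Task {
  kind: TaskKind;
  prompt: string;
}

type Route = "local" | "frontier";

// A model is represented as a plain function; real adapters would call a local runtime or a cloud API.
type Model = (prompt: string) => string;

interface RunResult {
  route: Route;
  output: string;
}

// Local-first routing: complex tasks go straight to the frontier model; routine and
// structured tasks try the local model first and escalate only if the draft is rejected.
function runTask(
  task: Task,
  local: Model,
  frontier: Model,
  isAcceptable: (output: string) => boolean,
): RunResult {
  if (task.kind === "complex") {
    return { route: "frontier", output: frontier(task.prompt) };
  }
  const draft = local(task.prompt);
  if (isAcceptable(draft)) {
    return { route: "local", output: draft };
  }
  // Escalation: the paid frontier call happens only when the local answer is not good enough.
  return { route: "frontier", output: frontier(task.prompt) };
}
```

In this sketch, paid tokens are consumed only for complex tasks and for the routine tasks whose local draft fails the quality check.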

What does “persistent memory” mean in practice?

Our platform stores structured memory in a local vector database so that:

● Knowledge persists across runs

● Context improves over time

● Memory entries can be inspected, edited, approved, or deprecated

● Workflows become stable and repeatable

This turns AI from “chat sessions” into a governed engineering system.
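
A minimal sketch of how inspectable, reviewable memory could work: each entry carries a review status, and retrieval ranks only approved entries by cosine similarity to a query embedding. The MemoryEntry shape, the status values, and the hand-written two-dimensional embeddings are assumptions for illustration; a real vector database would store embeddings produced by an embedding model and use an index rather than a linear scan.

```typescript
// Hypothetical review states, mirroring "inspected, edited, approved, or deprecated".
type MemoryStatus = "draft" | "approved" | "deprecated";

interface MemoryEntry {
  id: string;
  text: string;
  embedding: number[]; // produced by an embedding model in practice; hand-written in this sketch
  status: MemoryStatus;
}

function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("embedding dimensions differ");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Rank approved entries by similarity and return the top k; drafts and deprecated
// entries stay inspectable in the store but are never returned for a prompt.
function retrieve(store: MemoryEntry[], query: number[], k: number): MemoryEntry[] {
  return store
    .filter((entry) => entry.status === "approved")
    .map((entry) => ({ entry, score: cosineSimilarity(entry.embedding, query) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map((ranked) => ranked.entry);
}

// Deprecation returns a new store with the entry's status changed instead of deleting it.
function deprecate(store: MemoryEntry[], id: string): MemoryEntry[] {
  return store.map((entry): MemoryEntry =>
    entry.id === id ? { ...entry, status: "deprecated" } : entry,
  );
}
```

Because deprecate keeps the entry in the store, a reviewer can still see why an old fix was retired, while retrieval only surfaces knowledge that has been approved. Limiting retrieval to the top k relevant entries is also what keeps the context sent to a model small.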

Is this tied to a specific LLM provider?

No. You can:

● Use OpenAI, Anthropic, Gemini or other foundation models

● Use a small on-prem LLM for local processing

● Switch providers without rebuilding your workflows

This prevents vendor lock-in and keeps your architecture flexible.
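
Provider independence typically comes from writing workflows against a common interface rather than a specific vendor SDK. The sketch below assumes a hypothetical LLMProvider interface with stub adapters; it does not call any real OpenAI, Anthropic, or Gemini API, and it is kept synchronous for brevity where real calls would be asynchronous.

```typescript
// Hypothetical provider interface; a real adapter would wrap a vendor SDK or HTTP API.
interface LLMProvider {
  readonly id: string;
  complete(prompt: string): string;
}

// Stub adapter standing in for a real OpenAI, Anthropic, Gemini, or local-model client.
class StubProvider implements LLMProvider {
  readonly id: string;

  constructor(id: string) {
    this.id = id;
  }

  complete(prompt: string): string {
    return `[${this.id}] ${prompt}`;
  }
}

const providers = new Map<string, LLMProvider>();

function registerProvider(provider: LLMProvider): void {
  providers.set(provider.id, provider);
}

// The workflow depends only on the interface, so switching providers means
// changing providerId (for example, in configuration), not rewriting the workflow.
function summarizeTicket(ticket: string, providerId: string): string {
  const provider = providers.get(providerId);
  if (!provider) {
    throw new Error(`unknown provider: ${providerId}`);
  }
  return provider.complete(`Summarize this ticket: ${ticket}`);
}
```

Swapping summarizeTicket("login fails", "openai") for summarizeTicket("login fails", "local") changes which model answers without touching the workflow code.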

Is this suitable for enterprise or regulated environments?

Because data and memory are stored locally, the platform supports:

● Stronger data control

● Reduced cloud exposure risk

● Air-gapped deployment options

● Policy enforcement and audit tracing

● SSO and role-based access control (Enterprise tier)

This significantly simplifies enterprise security reviews.
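
Policy enforcement and audit tracing can be illustrated with a check that runs before each model call and records who made the request, for what purpose, and with what result. The sensitivity labels, the rule that confidential data stays on the local model, and the AuditRecord fields are hypothetical; they are not NYEX's actual policy-as-code syntax.

```typescript
// Hypothetical sensitivity labels and destinations.
type Sensitivity = "public" | "internal" | "confidential";
type Destination = "local" | "external";

interface AiRequest {
  user: string;
  purpose: string;
  sensitivity: Sensitivity;
  destination: Destination;
}

interface Decision {
  allowed: boolean;
  reason: string;
}

interface AuditRecord extends Decision {
  timestamp: string;
  user: string;
  purpose: string;
  destination: Destination;
}

// Hypothetical rule: confidential material may only be sent to the local model.
function evaluatePolicy(req: AiRequest): Decision {
  if (req.sensitivity === "confidential" && req.destination === "external") {
    return { allowed: false, reason: "confidential data must stay on the local model" };
  }
  return { allowed: true, reason: "permitted by default policy" };
}

// In this sketch the audit trail is an in-memory array; every decision, including denials, is appended.
const auditLog: AuditRecord[] = [];

function authorize(req: AiRequest, now: Date = new Date()): boolean {
  const decision = evaluatePolicy(req);
  auditLog.push({
    timestamp: now.toISOString(),
    user: req.user,
    purpose: req.purpose,
    destination: req.destination,
    ...decision,
  });
  return decision.allowed;
}
```

Recording denials as well as approvals is what lets reviewers answer "who used what, for which purpose, and with what result" after the fact.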

Do we need a large engineering team to use this?

The platform is designed to reduce manual orchestration and repetitive AI setup.

It includes:

● Reusable workflows

● Built-in evaluation and replay

● Automated routing between models

● Governance and audit logging

Small teams can deliver production-grade AI systems without building complex infrastructure from scratch.

What kind of hardware is required to run the local LLM?

For small on-prem LLMs used for routine processing, a modern laptop or workstation with enough RAM to hold the model is typically adequate. Memory needs grow with model size and shrink with quantization.

Foundation models still run through your existing cloud subscription when higher-quality output is required.

You can scale your local model capacity based on your needs.
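
To put RAM requirements in concrete terms, the memory needed to hold a model's weights is roughly the parameter count times the bits per weight, divided by 8. This TypeScript sketch applies that arithmetic; the 20% headroom factor is an assumed rule of thumb, and runtime overhead such as the context cache is not modeled separately.

```typescript
// Approximate RAM needed just to hold the weights: parameters x bits per weight / 8 bytes.
function weightMemoryGB(parameterCount: number, bitsPerWeight: number): number {
  const bytes = (parameterCount * bitsPerWeight) / 8;
  return bytes / 1e9; // decimal gigabytes
}

// Assumed rule of thumb: require ~20% headroom above the weights for runtime overhead.
function fitsInRam(
  parameterCount: number,
  bitsPerWeight: number,
  availableGB: number,
  headroom = 1.2,
): boolean {
  return weightMemoryGB(parameterCount, bitsPerWeight) * headroom <= availableGB;
}
```

For example, a 7-billion-parameter model quantized to 4 bits needs about 3.5 GB for its weights, versus about 14 GB at 16-bit precision, which is why quantized small models fit comfortably on machines with 8 GB of RAM.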

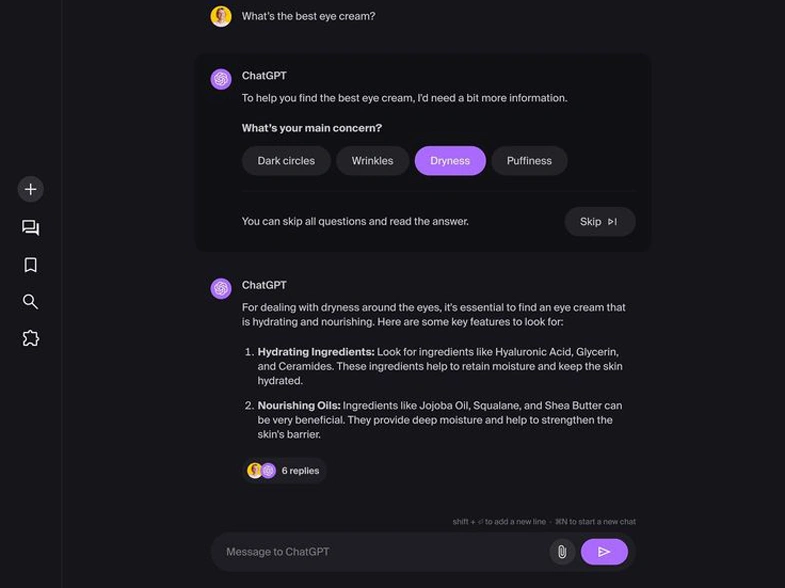

How is this different from using prompts in ChatGPT or other AI tools?

This platform is built for engineering systems:

● Persistent memory

● Inspectable vector storage

● Replayable executions

● Governance and policy controls

● Multi-step workflow orchestration

● Enterprise auditability

It moves AI from experimentation to structured, repeatable, and governed production workflows.

How does licensing work if everything runs locally?

a. License / Subscription for NYEX (The Platform)

This license gives you the right to use the NYEX AI Engineering Platform itself.

What the NYEX license controls:

● Access to the platform software

● Feature tiers (Free / Pro / Team / Enterprise)

● Governance capabilities (policy engine, approvals, audit exports)

● Collaboration features (multi-user workspaces)

● Enterprise controls (SSO, RBAC, compliance modules)

● Certified builds and enterprise distribution (if applicable)

● Software updates and support

What the NYEX license does NOT include:

● LLM token usage

● Foundation model subscription

● Cloud AI costs

● Local hardware costs

The NYEX license governs the engineering infrastructure layer, not the AI compute layer.

b. License / Subscription for the LLM Provider

This is completely separate.

You bring your own:

● OpenAI subscription

● Anthropic subscription

● Gemini subscription

● Or any other foundation model provider

You pay those providers directly for:

● Token usage

● Model access

● API calls

● Any cloud compute involved

NYEX does not resell tokens and does not add markup to your LLM costs.

This means:

● You pay only for what you consume.

● You maintain full control over your LLM billing.

● You can switch providers without changing your NYEX license.

c. Local LLM Usage

If you use a small on-prem LLM:

● You do not pay per token.

● Costs are limited to your own hardware.

● NYEX simply orchestrates and routes tasks to it.

NYEX provides the orchestration and governance layer — not the model license.

Why This Separation Matters

1. Cost Transparency

You see exactly what you’re paying for:

● Platform capability (NYEX)

● AI compute usage (LLM provider)

2. No Vendor Lock-in

You can:

● Change model providers

● Adjust usage patterns

● Control token consumption

Without affecting your NYEX license.

3. Enterprise Procurement Simplicity

Enterprises often:

● Already have LLM provider agreements

● Already have cloud contracts

● Already have model governance policies

NYEX integrates into those existing agreements rather than replacing them.

Hear from Our Partners

Jane Dawson

Integrating the AI chatbot into our platform reduced our support workload by over 40%. It handles routine queries efficiently, ensures accuracy in responses, and frees our team to focus on innovation.

Emily Carter

Before working with [AI Startup], managing our workflows was time-consuming and costly. With their AI-powered automation platform, we managed to reduce operational costs by nearly 25% within just three months.

Michael Johnson

Our decision criteria were clear: scalability and seamless integration. [AI Startup] delivered both, integrating flawlessly with our operations and accelerating our development timeline by months.